데이터브릭스는 29일 그랜드 인터컨티넨탈 서울 파르나스 호텔에서 국내 첫 오프라인 기자 간담회를 개최해 데이터브릭스의 국내 전략에 대해 소개했다. 데이터브릭스가 국내에서 가속화 되고 있는 레이크하우스 시장 규모를 키운다.

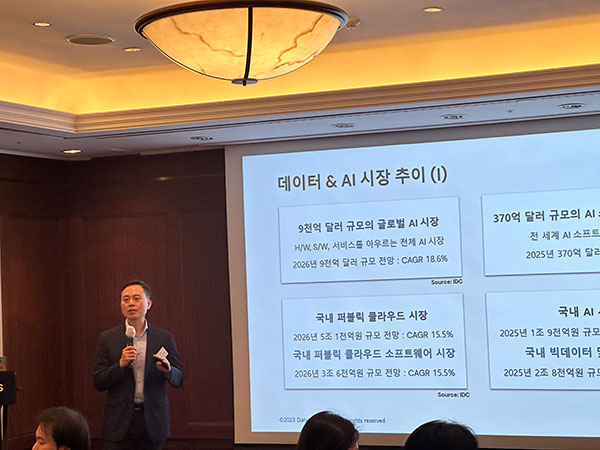

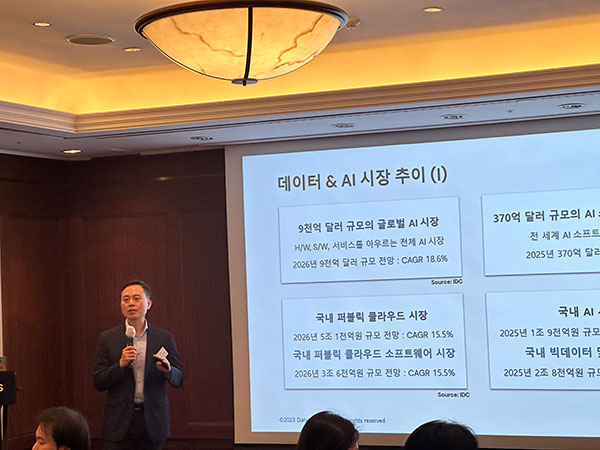

▲데이터브릭스 코리아 장정욱 대표

작년 국내 약 80% 성장·매출 10억불 달성

통합 데이터플랫폼…엔지니어링·BI·AI 지원

오픈소스 생성형 AI 모델 “LLM 보편화 기대”

퍼블릭 클라우드는 2026년까지 매년 15.5% 성장률을 기록하며, AI와 빅데이터 분석 시장도 빠르게 성장할 것으로 기대된다. 이러한 가운데, 데이터브릭스가 국내에서 가속화 되고 있는 레이크하우스 시장 규모를 키운다.

데이터브릭스는 29일 그랜드 인터컨티넨탈 서울 파르나스 호텔에서 국내 첫 오프라인 기자 간담회를 개최해 데이터브릭스의 국내 전략에 대해 소개했다.

데이터브릭스 코리아 장정욱 대표는 “개방성을 기반으로 데이터 엔지니어, 애널리스트, 사이언티스트들이 통합될 거버넌스 프레임워크에서 데이터를 관리할 수 있는 통합 환경을 지원해 업무 효율 향상에 기여하겠다”며, 특히 “올해 채용과 교육을 강화해 경쟁력 있는 비즈니스 파트너 생태계를 확장하고, 신뢰할 수 있는 데이터 레이크하우스 플랫폼으로 거듭나겠다”고 말했다.

데이터브릭스는 작년 4월 한국지사를 공식 오픈하고, 최근 장정욱 한국 초대 지사장을 선임해 국내 비즈니스 본격화를 알렸다. 가트너 선정 데이터 관리 영역과 ML 플랫폼의 리더로 동시에 채택된 유일 업체로, 현재 9천개 이상 고객사를 두고 있다.

AI를 통한 혁신과 효율 창출에 대한 관심이 증폭되는 가운데, 데이터 전략의 수립이 강조된다. 기술적인 혁신뿐만 아니라 실제 성과로 연결되는 ML 모델 발굴이 중요해졌고, 데이터의 품질과 신뢰성 확보, 데이터 프로세스의 속도 확보가 중요하다.

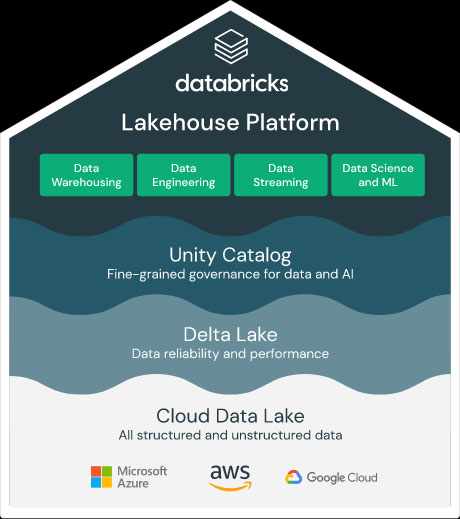

장 대표는 이와 같은 추세에 따라 “시스템이 유기적으로 통합된 플랫폼이 중요하다”며, 또한 “단일 클라우드 플랫폼이 아니라 멀티 클라우드 플랫폼과 오픈 소스 및 포맷 기반 개방형이 채택될 것”이라고 말했다.

데이터브릭스의 키워드는 단연 '데이터'다. 이는 데이터 중심 엔터프라이즈로서 고객 관리, 제품 개발, 직원 생산성, 비즈니스 운영 등 비즈니스의 모든 측면에서 데이터와 AI를 활용하겠다는 의미다.

데이터브릭스는 데이터 웨어하우스와 데이터 레이크를 결합해 데이터와 AI를 통합한 개방형 플랫폼 레이크하우스를 제공한다. 레이크하우스는 많은 데이터를 클라우드 기반으로 저장해 모든 데이터에 대한 엔지니어링, 비즈니스 인텔리전스(BI) 및 AI와 ML(머신러닝)을 모두 지원하는 개방형 통합 데이터 플랫폼이다.

데이터브릭스는 배치 또는 스트리밍 형태로 수집되는 대량의 정형 및 비정형 데이터를 처리하기 위한 기존의 복잡한 아키텍처를 단순화시킨다.

국내 이마트 24, 아모레퍼시픽, 지마켓, 볼보, 한화시스템, 무신사, 데브시스터즈 등 유통업체, 이커머스, 게임 산업에서 레이크하우스 플랫폼을 도입했다. 장 대표는 “지난해 2022년 매출이 10억 달러를 달성해 국내 약 80% 성장, 아태 지역은 90% 성장을 이뤘다”고 말했다.

장 대표는 “한국의 비즈니스 리더들이 데이터와 AI가 가진 가치를 인식하고, 이를 활용해 비즈니스 혁신을 추진하고 있는 중요한 시점에서 데이터브릭스는 올해 더 많은 조직들에게 효율적인 가치를 제공하는 통합 데이터 플랫폼이 될 것”이라고 주장했다.

마지막으로 장 대표는 “퍼블릭 클라우드를 기반으로 한 시장의 변화는 지속적으로 이어질 수밖에 없는 트렌드이고 변하지 않을 것”이며, “개방형을 기반으로 한다는 측면이 사업에서 얻는 가장 지속적인 차별화 포인트가 될 것이라고 생각한다”고 덧붙였다.

■ 챗GPT 보편화 위한 오픈소스 모델 ‘돌리’

이날 행사에서 데이터브릭스는 새로운 오픈소스 AI 모델 ‘돌리(Dolly)’를 소개했다.

데이터브릭스는 챗GPT-3는 매개변수가 1,750억개인데 반해 돌리는 60억개에 불과하지만, 한 대의 머신에서 단 3시간을 학습시키는 것만으로 챗GPT와 유사한 기능을 구현해 비용 효율적이라고 주장했다.

AI 기업의 데이터셋은 민감한 지적 재산으로서 제3자에게 전달되는 과정에서 민감하게 작용할 수 있다. 대부분의 머신러닝(ML) 사용자는 모델을 직접 소유하는 것이 장기적으로 봤을 때 이상적이라는 판단 하에 오픈소스의 중요성이 강조된다.

데이터브릭스는 “챗GPT와 같은 최신 모델의 질적 개선에 기여한 요인은 보다 규모가 크거나 더 미세 조정된 기반 모델이 아닌 명령어 추종 훈련 데이터”며, “돌리의 코드를 오픈소스로 공개해 극소수의 기업만이 구현 가능한 LLM이 아닌 모든 기업이 직접 맞춤화하고 사용할 수 있는 모델로서 보편화 되기를 기대한다”고 말했다.