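AI is spreading and driving innovation rapidly, but unforeseen social issues and concerns, such as the Iruda controversy, are also emerging. The Ministry of Science and ICT has announced a strategy for realizing trustworthy AI, aimed at human-centered AI. The strategy will be pursued in phases through 2025 via 3 major strategies spanning technology, institutions, and ethics, and 10 action items.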

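Ministry of Science and ICT announces 3 major strategies and 10 action items for AI that everyone can trust and benefit from

Phased review and implementation planned through 2025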

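AI is spreading rapidly through industry and society and generating innovation, but unforeseen social issues and concerns are emerging alongside it, including the controversy over the AI chatbot Iruda (January 2021), the deepfake video of former U.S. President Obama (July 2018), and MIT's development of a psychopath AI (June 2018).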

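On the 13th, at the 22nd plenary meeting of the Presidential Committee on the Fourth Industrial Revolution, the Ministry of Science and ICT announced its "Strategy for Realizing Trustworthy AI," aimed at human-centered AI. The strategy will be pursued in phases through 2025 via 3 major strategies spanning technology, institutions, and ethics, and 10 action items.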

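Choi Ki-young, Minister of Science and ICT, said, "The Iruda incident left our society with a great deal of homework on how to deal with the trustworthiness of AI," adding, "We will clarify the criteria for securing AI trust so that companies and researchers are not confused while developing AI products and services, and so that the public is not harmed as a result." He also said, "We will prepare support measures so that small and medium-sized enterprises and others have no difficulty complying with the trustworthiness criteria."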

[1] Creating an environment for building trustworthy AI

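① Establishing a trust assurance framework for each stage of building AI products and services - Following the "development, verification, certification" stages through which the private sector builds AI products and services, the ministry will present and support trust assurance criteria and methodologies that companies, developers, and third parties can reference to achieve trustworthiness. Accordingly, a development guidebook, a verification framework, and voluntary private-sector certification will be pursued.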

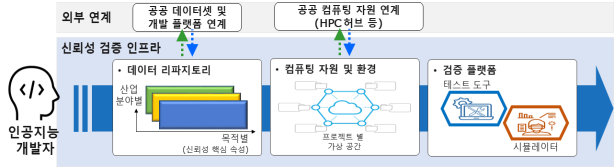

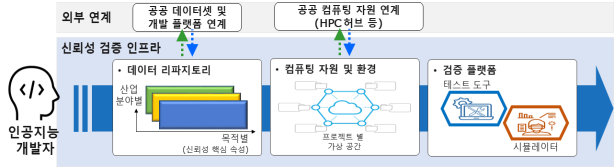

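② Supporting the private sector in securing AI trustworthiness - So that startups and others with limited technical and financial resources can also secure trustworthiness systematically, the ministry will operate a platform that provides integrated support for the "data acquisition, algorithm training, verification" steps of building AI. To that end, it plans to extend the "AI Hub" platform, which currently provides training data and computing resources, with additional functions such as analysis of the level achieved for each trust attribute under the verification framework and testing in real-world environments.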

▲ Proposed layout of the one-stop support platform for "data acquisition, algorithm training, verification" [Image: Ministry of Science and ICT]

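③ Developing core technologies for AI trustworthiness - The ministry will pursue the development of technologies that improve AI explainability, fairness, and robustness, so that the ability for AI to explain its decision criteria can be added to systems that have already been built, and so that AI can itself diagnose and remove legal, institutional, and ethical bias. Three related projects, to run from next year through 2026, have passed the preliminary feasibility study.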

[2] Laying the foundation for safe use of AI

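④ Improving the trustworthiness of AI training data - Working with the private sector, the ministry will establish standard criteria, such as trust assurance verification metrics, that both the public and private sectors should follow in the production process for AI training data. In addition, in the "Data Dam" project carried out under the Digital New Deal, trustworthiness considerations such as compliance with laws and regulations will be applied proactively across the entire construction process in order to improve quality.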

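⑤ Pursuing trust assurance for high-risk AI - The ministry plans to define the category of high-risk AI, meaning AI that could pose a potential risk to public safety or fundamental rights, and to require that users be given "notice" that such AI is in use before a service is provided. Whether to institutionalize the steps that follow notice, namely "refusal to use" the AI-based service, "explanation of results" regarding the basis for the AI's decisions, and "objection" to those results, will be reviewed comprehensively over the medium to long term, taking into account global legislative and regulatory trends, industrial impact, social consensus and acceptance, and technical feasibility.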

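⑥ Conducting AI impact assessments - To analyze and respond comprehensively and systematically to AI's effects on all aspects of people's lives, the ministry plans to introduce the social impact assessment provided for in Article 56 of the Framework Act on Intelligent Informatization. AI's impact will be analyzed comprehensively on the basis of criteria such as safety and transparency, and the results will be used when future AI policies or technical and administrative measures are drawn up.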

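⑦ Improving institutions to raise trust in AI - Among the tasks identified last year through the "Roadmap for Improving AI Laws, Institutions, and Regulations," the ministry will improve the institutions relating to AI trust assurance and the protection of users' lives, including △ creating an environment for industry self-management and oversight of algorithms, △ securing the fairness and transparency of platform algorithms, △ establishing algorithm disclosure criteria that protect trade secrets, and △ establishing technical standards for high-risk technology.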

[3] Spreading sound AI awareness across society

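⑧ Strengthening AI ethics education - A general framework for AI ethics education will be prepared, with content that conveys social and humanities perspectives and how ethical standards are put into practice in society. Building on this, tailored ethics education for researchers, developers, the general public, and others will be developed and delivered.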

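⑨ Preparing and distributing checklists for each stakeholder group - As concrete codes of conduct for the AI ethics standards, checklists will be developed and distributed so that researchers, developers, users, and others can check their own ethical compliance in their work and daily lives. The checklists will reflect the course of technological development.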

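⑩ Operating an ethics policy platform - A forum will be set up in which diverse members of society, including academia, business, and the public sector, take part to discuss AI ethics, gather opinions, and deliberate on the way forward.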