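As the speed of AI computation becomes tied to corporate competitiveness, demand for semiconductors optimized for AI processing is rising. AI semiconductors are better served by parallel rather than serial processing, and by memory-centric rather than processor-centric design. For AI semiconductor companies to grow, they need globally competitive technology. They also need close communication with the downstream industries that use AI chips.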

Growing need for semiconductors suited to AI processing

Von Neumann architecture struggles with high-speed parallel computation

PIM semiconductors and memory-centric computing are needed

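As the value of data grows, so does demand for high-speed data processing and computing power. Market research firm IDC published the white paper 'Data Age 2025' in 2018. It forecast that the total volume of data worldwide will grow at an average annual rate of 61%, reaching 175 ZB (zettabytes) by 2025.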

▲ As AI technology spreads to almost every industry, demand is rising for AI semiconductors that can generate, collect, and use massive amounts of data more effectively [Image=Pixabay]

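IDC also projected three shares for the same year:
- IoT devices will generate more than 50% of all data.
- Around 50% of all data will be stored and processed in public clouds.
- Around 30% will be consumed in real time, for example by autonomous vehicles.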

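On the 1st, ETRI's Intelligence Policy Research Division released a white paper, 'ETRI AI Execution Strategy 2: Strengthening the Technological Competitiveness of AI Semiconductors and Computing Systems'. In it, the division stressed that "data types and ways of using data are diversifying." It added that "to respond, data processing and computation must be advanced along the entire data-processing chain, from cloud computing to edge computing to devices."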

Why semiconductors optimized for AI processing and computation are needed

Existing semiconductor and computing technologies face serious obstacles in reaching this level of data processing and computation. The white paper points to two structural limitations.

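The first is the physical limit of semiconductor integration, epitomized by Moore's Law. As transistor density increases, problems such as heat dissipation and interference between devices become harder to overcome.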

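The second is that the von Neumann architecture, the foundation of conventional computer systems, is inefficient at the high-speed parallel computation that AI processing demands. The architecture is built on a sequential flow between main memory, the central processing unit, and input/output devices. When it performs high-speed parallel computation, that flow creates a data bottleneck.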

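Parallel processing inevitably involves moving data between the processor and storage, and between levels of the storage hierarchy. The more data moves, the more performance and energy consumption depend on data-transfer speed rather than CPU speed. According to the white paper, addressing this requires high-performance computing technology. In the long run, it also requires transformative approaches to computing-system materials, architectures, and computational models.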
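
The bottleneck described above is often illustrated with the roofline model, which the white paper itself does not mention. The Python sketch below is a minimal, non-authoritative illustration. It assumes hypothetical hardware figures: a 10 TFLOP/s processor and 500 GB/s of memory bandwidth. It shows how a workload that reuses little data is capped by memory bandwidth rather than by compute.

```python
# Minimal roofline sketch: attainable throughput is capped either by the
# processor's peak compute rate or by how fast memory can feed it data.
# All hardware numbers used below are hypothetical placeholders.

def attainable_flops(peak_flops: float, mem_bandwidth: float, intensity: float) -> float:
    """Return attainable FLOP/s.

    peak_flops:    peak compute rate of the processor (FLOP/s)
    mem_bandwidth: sustained memory bandwidth (bytes/s)
    intensity:     arithmetic intensity of the workload (FLOPs per byte moved)
    """
    return min(peak_flops, mem_bandwidth * intensity)


def is_memory_bound(peak_flops: float, mem_bandwidth: float, intensity: float) -> bool:
    # Below the "ridge point" (peak / bandwidth), data movement rather than compute is the limit
    return intensity < peak_flops / mem_bandwidth


if __name__ == "__main__":
    PEAK = 10e12  # hypothetical 10 TFLOP/s processor
    BW = 500e9    # hypothetical 500 GB/s memory system
    # An FP32 matrix-vector product does ~2 FLOPs per 4-byte weight read, i.e. ~0.5 FLOP/byte
    workloads = [("matrix-vector (low reuse)", 0.5), ("large matrix-matrix (high reuse)", 50.0)]
    for name, ai in workloads:
        perf = attainable_flops(PEAK, BW, ai)
        bound = "memory-bound" if is_memory_bound(PEAK, BW, ai) else "compute-bound"
        print(f"{name}: {perf / 1e12:.2f} TFLOP/s, {bound}")
```

With these placeholder numbers, the low-reuse workload reaches only a small fraction of peak compute. The reason is that data cannot be delivered fast enough, which is the situation the white paper describes.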

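Beyond technological progress, AI semiconductors and computing technology matter more every day for market and industrial competitiveness, because AI is being applied rapidly across industries. Stable, efficient AI depends on semiconductor and computing technology, which in turn drives industrial development and, ultimately, national competitiveness.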

AI accelerators vs. PIM semiconductors

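AI semiconductors are chips optimized for AI data processing, such as high-speed parallel computation. Today's system semiconductors run AI workloads inefficiently, notably in power consumption, so dedicated chips are needed. The white paper notes that research is proceeding in two directions to address the two limitations described above.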

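The first direction is developing AI accelerators: dedicated AI processors that support the computation patterns characteristic of AI algorithms. These processors implement parallelized circuits between the processor and memory to improve parallel-processing performance, latency, and power efficiency. They are divided by purpose into training and inference types.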
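
As a rough illustration of the parallelism AI accelerators exploit, the sketch below splits a matrix-vector product across several hypothetical processing units. The matrix-vector product is the core operation of many neural-network layers. Python threads stand in for hardware units only to show how the work decomposes; they do not model real accelerator speedups. The function names are illustrative, not taken from any accelerator SDK.

```python
from concurrent.futures import ThreadPoolExecutor

# Toy data-parallel decomposition of y = W @ x: each hypothetical "processing unit"
# computes a disjoint block of output rows independently of the others.


def matvec_rows(W, x, rows):
    # Multiply-accumulate (MAC) operations for a subset of output rows
    return [sum(w * xi for w, xi in zip(W[r], x)) for r in rows]


def serial_matvec(W, x):
    # Reference version: one unit walks through every row in sequence
    return matvec_rows(W, x, range(len(W)))


def parallel_matvec(W, x, num_units=4):
    n = len(W)
    # Split row indices into roughly equal contiguous chunks, one per unit
    chunks = [range(i * n // num_units, (i + 1) * n // num_units) for i in range(num_units)]
    with ThreadPoolExecutor(max_workers=num_units) as pool:
        # map() returns results in the same order as the chunks
        parts = list(pool.map(lambda rows: matvec_rows(W, x, rows), chunks))
    # Concatenate the partial results back into one output vector
    return [v for part in parts for v in part]
```

For example, `parallel_matvec([[1, 2], [3, 4], [5, 6]], [1, 1])` gives the same result as `serial_matvec`. Because the rows are independent, hardware with many MAC units can compute them at the same time. That is the property dedicated AI processors are built around.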

▲ GPUs, with many cores, are better suited to high-speed parallel computation than CPUs, which have few cores [Figure=NVIDIA]

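NVIDIA dominates the market for dedicated AI processors. Its GPUs account for 97% of the AI accelerators used in the four largest cloud services: Amazon, Microsoft, Google, and Alibaba. Google has developed the TPU (Tensor Processing Unit), which uses an acceleration architecture dedicated to AI algorithms, since 2016. It competes with NVIDIA by deploying TPUs that deliver 100 PF (petaflops) in its own cloud.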

The second direction is developing PIM (Processing in Memory) processors based on HBM (High Bandwidth Memory), which package memory and processor on the same chip.

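In an HBM-based PIM processor, the processor is packaged together with the memory chip or stacked memory cells. This overcomes the inefficiency of moving data from memory to the processor. It also reduces two costs: the bottleneck caused by the bandwidth gap between CPU and memory, and the energy consumed by data movement. Samsung Electronics and SK hynix are focusing on HBM development, while Micron is focusing on HMC (Hybrid Memory Cube) technology.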
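
Why placing compute next to memory saves energy can be shown with a back-of-the-envelope model. The sketch below is a minimal illustration. The per-operation and per-byte energy values are hypothetical placeholders chosen only to show the shape of the calculation; they are not measurements of any HBM, HMC, or PIM product.

```python
from dataclasses import dataclass

# Illustrative energy model: total energy = compute energy + data-movement energy.
# All picojoule (pJ) values used below are HYPOTHETICAL placeholders, not chip data.


@dataclass
class EnergyModel:
    pj_per_op: float          # energy per arithmetic operation (pJ)
    pj_per_byte_moved: float  # energy to move one byte between memory and the compute logic (pJ)

    def total_pj(self, ops: int, bytes_moved: int) -> float:
        return ops * self.pj_per_op + bytes_moved * self.pj_per_byte_moved


def movement_share(model: EnergyModel, ops: int, bytes_moved: int) -> float:
    # Fraction of total energy spent on moving data rather than computing
    total = model.total_pj(ops, bytes_moved)
    return bytes_moved * model.pj_per_byte_moved / total


if __name__ == "__main__":
    # Roughly a matrix-vector product over ~1M FP32 weights: ~2M ops, ~4 MB read
    ops, nbytes = 2_000_000, 4_000_000
    processor_centric = EnergyModel(pj_per_op=1.0, pj_per_byte_moved=20.0)  # placeholder: data crosses chips
    pim = EnergyModel(pj_per_op=1.0, pj_per_byte_moved=2.0)                 # placeholder: logic beside the cells
    for name, m in [("processor-centric", processor_centric), ("PIM", pim)]:
        print(f"{name}: {m.total_pj(ops, nbytes) / 1e6:.1f} uJ, "
              f"movement share {movement_share(m, ops, nbytes):.0%}")
```

The model only captures the direction of the effect. When data movement dominates the energy budget, lowering the cost per byte moved shrinks the total far more than speeding up the arithmetic would.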

Memory-centric computing: a computing architecture suited to AI processing

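The white paper defines an AI computing system as a high-performance computer that generates, processes, and uses large volumes of data at very high speed. Higher accuracy in AI requires more training data at higher resolution, so the amount of computation grows exponentially.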

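Processor-centric computing based on the von Neumann architecture is limited more by data-transfer speed than by CPU performance when it handles large volumes of data. This makes it unsuitable for massively parallel workloads such as AI applications.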

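Most supercomputers have low energy efficiency. The white paper cites Summit, the top-ranked supercomputer in the United States, as an example. Summit performs 148 quadrillion operations per second and consumes up to 13 MW of power. That is enough to light 8,000 American homes at once.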
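
The following is a worked check using only the figures above. It treats the 13 MW maximum as the operating power, which is a simplifying assumption. On that basis, Summit's efficiency and the per-household comparison come out roughly as:

$$
\frac{148{,}000 \times 10^{12}\ \text{ops/s}}{13 \times 10^{6}\ \text{W}}
= \frac{1.48 \times 10^{17}}{1.3 \times 10^{7}}\ \frac{\text{ops/s}}{\text{W}}
\approx 1.14 \times 10^{10}\ \frac{\text{ops/s}}{\text{W}}
\approx 11.4\ \text{billion ops/s per watt},
\qquad
\frac{13 \times 10^{6}\ \text{W}}{8{,}000\ \text{homes}} \approx 1.6\ \text{kW per home}
$$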

▲ Summit, the top-ranked U.S. supercomputer, consumes enough power to light 8,000 homes at once [Photo=Carlos Jones]

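To overcome this energy-efficiency problem, recent high-performance computing research has been adopting memory-centric computing. Memory-centric computing is a model that reorganizes the computer architecture around memory rather than the processor in order to minimize data movement.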

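Its defining feature is a large shared memory pool built by connecting many memory nodes with a high-speed fabric. Multiple compute nodes each process data in parallel through this pool, which raises overall information-processing performance.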
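
The organization described above can be sketched as a toy model. Several memory nodes are exposed as one globally addressed pool, and several compute nodes each work on their own address range of that pool in parallel, without copying the data into private memory. Everything here is a hypothetical illustration. `MemoryPool`, `compute_node`, and `parallel_sum` are invented names, not the API of CXL, Gen-Z, or any real fabric, and Python threads stand in for compute nodes.

```python
import threading

# Toy model of memory-centric computing (illustrative only, not a real fabric API).


class MemoryPool:
    """Hypothetical shared pool built from several separate memory nodes."""

    def __init__(self, node_sizes):
        self.nodes = [bytearray(size) for size in node_sizes]
        # Global start address of each memory node within the pool
        self.offsets = []
        base = 0
        for size in node_sizes:
            self.offsets.append(base)
            base += size
        self.size = base

    def _locate(self, addr):
        # Translate a global address into (memory node index, local offset)
        if not 0 <= addr < self.size:
            raise IndexError(addr)
        for i in reversed(range(len(self.nodes))):
            if addr >= self.offsets[i]:
                return i, addr - self.offsets[i]

    def read(self, addr):
        node, off = self._locate(addr)
        return self.nodes[node][off]

    def write(self, addr, value):
        node, off = self._locate(addr)
        self.nodes[node][off] = value


def compute_node(pool, start, end, results, slot):
    # Each compute node reads its slice directly from the shared pool (no private copy)
    results[slot] = sum(pool.read(a) for a in range(start, end))


def parallel_sum(pool, num_compute_nodes):
    results = [0] * num_compute_nodes
    threads = []
    for k in range(num_compute_nodes):
        # Contiguous, non-overlapping address ranges, one per compute node
        start = k * pool.size // num_compute_nodes
        end = (k + 1) * pool.size // num_compute_nodes
        t = threading.Thread(target=compute_node, args=(pool, start, end, results, k))
        threads.append(t)
        t.start()
    for t in threads:
        t.join()
    return sum(results)
```

For example, build a pool from memory nodes of 4, 4, and 8 bytes and fill it. `parallel_sum(pool, 3)` then returns the same total as a serial sum, even though each compute node's range spans node boundaries transparently.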

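According to the white paper, companies are actively forming alliances around memory-centric computing. They are developing its core technologies, high-speed interconnects and non-volatile memory, along with the computing architectures and system software that use them. They are also working to build an ecosystem around these technologies.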

Since 2016, several consortia have worked to set industry standards for next-generation interconnects for memory-centric computing:
- CCIX (Cache Coherent Interconnect for Accelerators), led by AMD and Xilinx
- Gen-Z, led by Dell EMC and HPE
- OpenCAPI, led by IBM

In March 2019, Intel launched the CXL (Compute Express Link) consortium and released CXL 1.0. CXL is an interconnect technology that supports high-bandwidth memory sharing between accelerators and CPUs.

Neuromorphic chips: semiconductors that mimic the human brain

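Neuromorphic semiconductors are non-von Neumann chips. They use SNN (Spiking Neural Network) technology to mimic the neuron-synapse structure of the biological brain. Their cores contain transistors, memory, and a few other electronic components. Some of these components play the role of the brain's neurons, while the memory acts as the synapses that connect them.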
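
The neuron and synapse roles described above can be illustrated with the leaky integrate-and-fire (LIF) model, a common neuron model in SNNs. The sketch below is a minimal discrete-time version under assumed parameters (leak, threshold, reset). It is not the circuit of TrueNorth, Loihi, or any other specific chip. The `synapse_input` helper is hypothetical: it plays the memory-as-synapse role by weighting incoming spikes.

```python
# Minimal discrete-time leaky integrate-and-fire (LIF) neuron.
# leak, threshold, and reset are illustrative assumptions, not values from any chip.


def lif_neuron(input_currents, leak=0.9, threshold=1.0, reset=0.0):
    """Return the time steps at which the neuron emits a spike."""
    v = reset
    spikes = []
    for t, current in enumerate(input_currents):
        v = leak * v + current  # membrane potential decays, then integrates the input
        if v >= threshold:      # fire when the potential crosses the threshold
            spikes.append(t)
            v = reset           # return to the resting potential after a spike
    return spikes


def synapse_input(pre_spikes_per_step, weights):
    # Hypothetical synapse layer: stored weights scale the spikes of upstream neurons.
    # pre_spikes_per_step[t][i] is truthy if upstream neuron i fired at step t.
    return [sum(w for fired, w in zip(step, weights) if fired) for step in pre_spikes_per_step]
```

In this model the neuron only produces output, a spike, when enough weighted input accumulates. A neuron that receives no spikes does no work, which is one reason event-driven neuromorphic hardware can be power-efficient.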

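Neuromorphic semiconductors can process large amounts of data at low power. Their high integration density lets them learn in a brain-like way with high computational performance. Their performance is comparable to conventional deep learning, and their power efficiency is higher. This makes them well suited to mobile systems with limited power budgets.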

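However, software-based neuromorphic systems define neuron and synapse functions mathematically and run the actual computation on conventional computer systems. As a result, they ultimately hit limits in performance, training time, and power consumption. Overcoming these limits requires hardware-based neuromorphic semiconductors.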

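Research on brain-inspired neuromorphic semiconductors has been carried out as government-led R&D since the mid-2000s, mainly in Europe and the United States. Since 2013, the EU has invested 1 billion euros in the Human Brain Project (HBP), a large-scale fundamental research project on the human brain. The United States launched its BRAIN Initiative the same year to pursue broad technology development.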

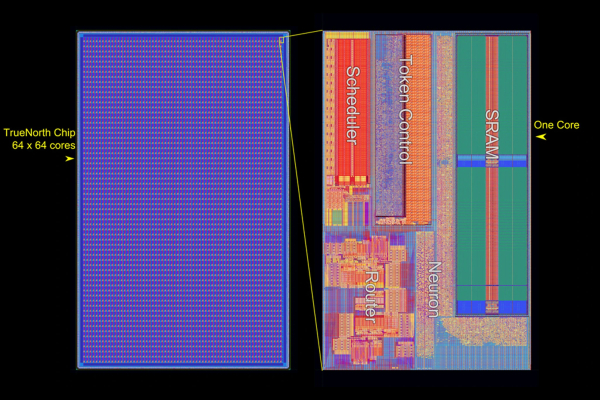

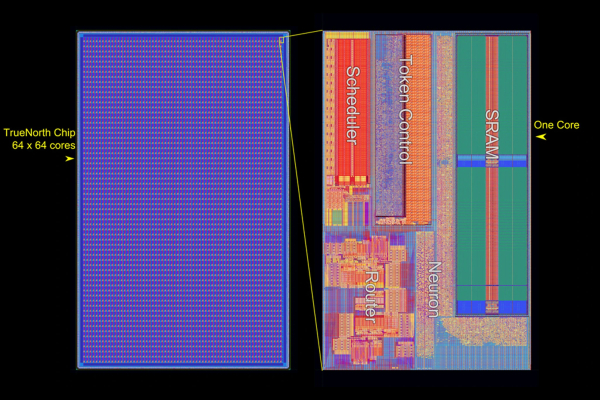

▲ Layout of the TrueNorth chip [Photo=IBM]

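In 2008, IBM joined the SyNAPSE project led by DARPA, an agency of the U.S. Department of Defense. In 2014 it developed a neuromorphic chip called TrueNorth. The chip can classify 1,200 to 2,600 image frames per second while using about 1/10,000 the power of a conventional processor.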

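SpiNNaker was developed in 2012 under the leadership of the University of Manchester in the UK. It is a massively parallel neuromorphic computer designed to model spiking neural networks in real time, and it can simulate 1 billion neurons. In 2019, Intel unveiled Loihi, a spiking neuromorphic chip capable of learning. The chip demonstrated learning and execution performance 1 million times faster than a DNN (Deep Neural Network).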

AI semiconductors: communication with the industries that use AI is essential

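We have entered an era in which the speed of AI computation is tied to corporate competitiveness. As a result, demand for semiconductors optimized for AI processing is growing.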

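The industry sees parallel rather than serial data processing as the more effective way to handle AI workloads. Accordingly, major companies and institutions are concentrating on processors that perform many operations across many cores. They are moving away from processors that perform sophisticated operations on a few cores.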

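They are also developing memory-centric semiconductors and computing architectures that place storage as close as possible to processing, which simplifies data movement. Work on neuromorphic chips that mimic the human brain outright is also continuing. Meanwhile, countries around the world are researching quantum computing, which moves beyond the bit, the basic unit of conventional computing.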

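The Korean government is also expanding investment and policy in this area to secure technological competitiveness in the AI era. Recent initiatives include:
- the 'National Strategy for Artificial Intelligence' (Dec. 2019)
- the 'AI Semiconductor Industry Development Strategy' (Oct. 2020)
- the 'National Supercomputing Leading Project' (Mar. 2020)
- the 'Quantum Computing Technology Development Project Plan' (Jan. 2019)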

June Paik, CEO of FuriosaAI, gave a keynote at Arm DevSummit 2020 last November. He said, "For an AI semiconductor company to grow, it must itself secure world-class AI chips and the software stack that runs on them."

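"Support from the industries that use AI semiconductors is also needed," he added. In other words, chip makers need to go beyond chips optimized for AI processing in general. They need to build demand-driven AI semiconductors optimized for specific uses such as manufacturing, finance, automotive, and biotech AI.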

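The AI semiconductor industry is technology-intensive, with intellectual property (IP) and specialized design capability at its core. Yet it is still an early-stage market with no dominant player. Now is the time for the industry to make diverse efforts to gain an early lead, and for the government to support communication between industries.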