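The number of smartphone users worldwide has reached two-thirds of the global population. To give each of those users the best possible experience, smartphone makers have poured their effort into camera technology. A range of techniques has been developed to overcome the physical limits of sensor size, and the image sensors that resulted are now doing their part beyond the smartphone, in advanced industries such as autonomous vehicles and IoT.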

Phone camera pixel counts up 364-fold in 19 years

Image sensors have grown alongside the smartphone

Samsung strengthens its image sensors with ISOCELL

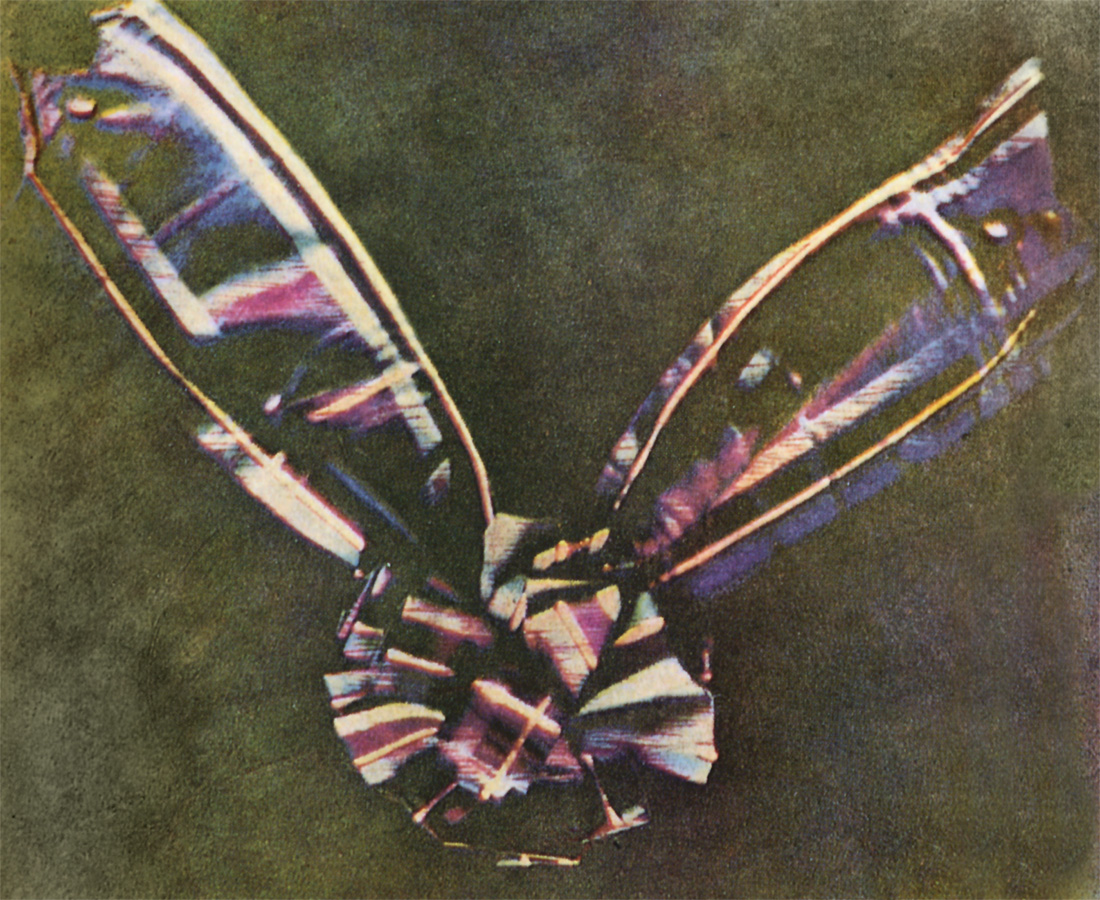

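The first color photograph in history, taken by Maxwell, the father of electromagnetism

Since 1861, when James Maxwell took the first color photograph in human history, camera technology has advanced without pause. In the 2000s, the arrival of digital cameras and mobile phones equipped with CMOS image sensors pushed that progress much further than anything before it.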

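High smartphone penetration drives image sensor development

19 years apart: the Kyocera VP-210 and the Huawei P20 Pro

Kyocera's VP-210, released in 1999, was the first mobile phone with a built-in camera. Its camera had 110,000 pixels. Huawei's flagship P20 Pro, released in 2018, carries a triple rear camera of 40 megapixels, 20 megapixels, and 8 megapixels, plus a 24-megapixel front camera. In 19 years, phones gained three more cameras and the maximum pixel count grew roughly 364-fold.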
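The 364-fold figure can be checked with a few lines of arithmetic. The sketch below uses only the pixel counts quoted in the article; the variable names are illustrative.

```python
# Figures quoted in the article.
vp210_pixels = 110_000            # Kyocera VP-210 (1999)
p20_pro_max_pixels = 40_000_000   # largest sensor on the Huawei P20 Pro (2018)

pixel_ratio = p20_pro_max_pixels / vp210_pixels
extra_cameras = (3 + 1) - 1       # three rear plus one front, versus a single camera

print(f"{pixel_ratio:.1f}x more pixels, {extra_cameras} more cameras")
```

The ratio comes out just under 364, which is the rounded figure used in the headline.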

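Smartphones in particular are a major driver of camera technology: two-thirds of the world's population uses a smartphone, and every one of those phones has a camera. Amid a crowd of competing smartphone topics, including AI and deep learning, 5G readiness, fingerprint recognition, and flexible displays, the camera remains a primary focus for many manufacturers.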

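Why so many image sensing technologies are being developed

As smartphones converge on uniformly high specifications, manufacturers are treating the camera as their brand differentiator and investing heavily in it. Samsung Electronics is promoting high-speed capture and Super Slow-mo, Apple is promoting big pixels, and Huawei is going after the market with its triple camera. But if simply adding more pixels would seem to settle the matter, why do manufacturers keep developing other technologies instead?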

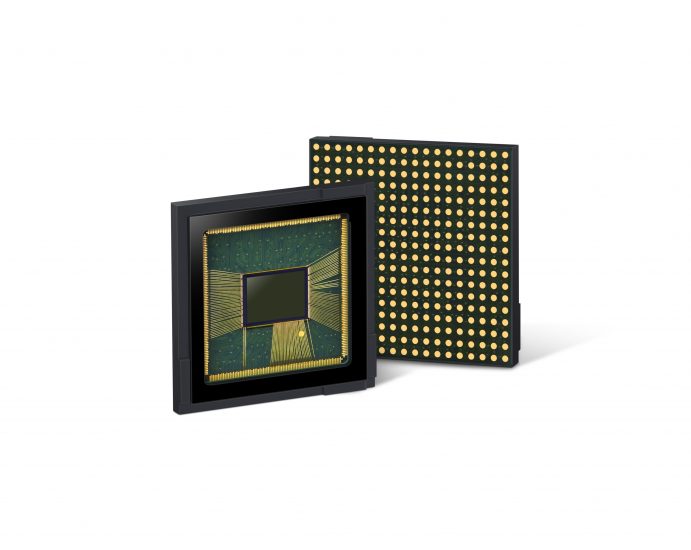

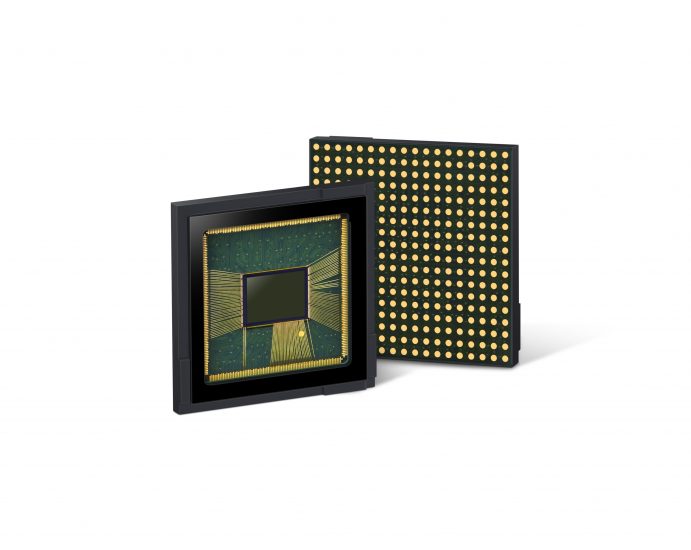

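Samsung Electronics CMOS image sensor 'ISOCELL Fast 2L9'

Pixel counts cannot be raised indefinitely because of physical limits. To add pixels to an image sensor of roughly 0.25" in size, each pixel has to shrink. But a small pixel cannot absorb enough light. Image sensor makers have raised each pixel's light-collection efficiency by moving the light-receiving area to the very top of the sensor, but even that approach has recently hit its limit. So they have shifted to compensating for camera performance with other technologies. Image quality is determined by the quality of the pixels, not their number.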
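The trade-off between pixel count and pixel size on a fixed sensor can be made concrete with a rough calculation. The sketch below is only an illustration: the 4.0 mm × 3.0 mm active area is a hypothetical value chosen for round numbers, not a figure from the article, and the helper `pixel_pitch_um` is a made-up name. It assumes square pixels that tile the whole area.

```python
import math

def pixel_pitch_um(sensor_width_mm: float, sensor_height_mm: float, pixel_count: int) -> float:
    """Approximate side length of one square pixel, in micrometres."""
    area_um2 = (sensor_width_mm * 1000.0) * (sensor_height_mm * 1000.0)
    return math.sqrt(area_um2 / pixel_count)

# Hypothetical 4.0 mm x 3.0 mm active area (illustrative, not from the article).
for megapixels in (3, 12, 48):
    pitch = pixel_pitch_um(4.0, 3.0, megapixels * 1_000_000)
    print(f"{megapixels:>2} MP -> pitch {pitch:.2f} um, {pitch ** 2:.2f} um^2 of area per pixel")
```

Quadrupling the pixel count on the same area halves the pixel pitch and leaves each pixel a quarter of the light-collecting area, which is the limit the paragraph above describes.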

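Above all, the biggest reason is how people actually use smartphone cameras. What most people shoot is café scenes or food, usually indoors where light is scarce. When light is insufficient, the camera raises its light sensitivity, that is, its ISO, to capture as much light as it can. The more sensitively a pixel responds to light, the more it affects its neighbors, so noise creeps into the photo. This is not a problem that adding pixels can solve.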

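Samsung Electronics 'Galaxy S9'

There are other reasons as well. Smartphone displays keep getting larger. The Galaxy S, which Samsung Electronics released in 2010, had a 4" display and a screen-to-body (STB) ratio of 57.88%. The Galaxy S9, released in 2018, had a 5.8" display and an STB ratio of 84.36%. From the early days of the smartphone until now, most consumers have preferred bezel-less designs. Achieving such a design means enlarging the display while holding the body size down as much as possible. In addition, the recent rise of selfie takers is pushing front camera performance higher. Manufacturers now have to enlarge the display and improve the front camera within a fixed body size.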
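For readers unfamiliar with the STB figure, it is simply display area divided by the area of the phone's front face. The sketch below shows one way to estimate it under simplifying assumptions: a perfectly rectangular display with no rounded corners or cutouts, and body dimensions that are hypothetical rather than taken from any real phone. Published figures may differ slightly because of such details.

```python
import math

MM_PER_INCH = 25.4

def screen_to_body_ratio(diagonal_in, aspect_w, aspect_h, body_w_mm, body_h_mm):
    """Percentage of the front face covered by a rectangular display."""
    # Split the diagonal into width and height using the aspect ratio.
    scale = diagonal_in / math.hypot(aspect_w, aspect_h)
    screen_w_mm = aspect_w * scale * MM_PER_INCH
    screen_h_mm = aspect_h * scale * MM_PER_INCH
    return 100.0 * (screen_w_mm * screen_h_mm) / (body_w_mm * body_h_mm)

# Hypothetical phone: 5.0" 16:9 display in a 70 mm x 140 mm body.
print(f"{screen_to_body_ratio(5.0, 9, 16, 70.0, 140.0):.2f}%")
```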

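Apple 'iPhone X' notch design

The iPhone X, which Apple unveiled in 2017, introduced the so-called notch design, a valley-like cutout at the top of the display, to enlarge the screen while still housing the face recognition camera module. The jeers likening it to an M-shaped receding hairline were short-lived: nearly every smartphone maker in the world except Samsung Electronics adopted the notch in its own phones. The industry expects phones with narrow notches, hole-in-active displays, and pop-up designs to launch in large numbers starting in 2019.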

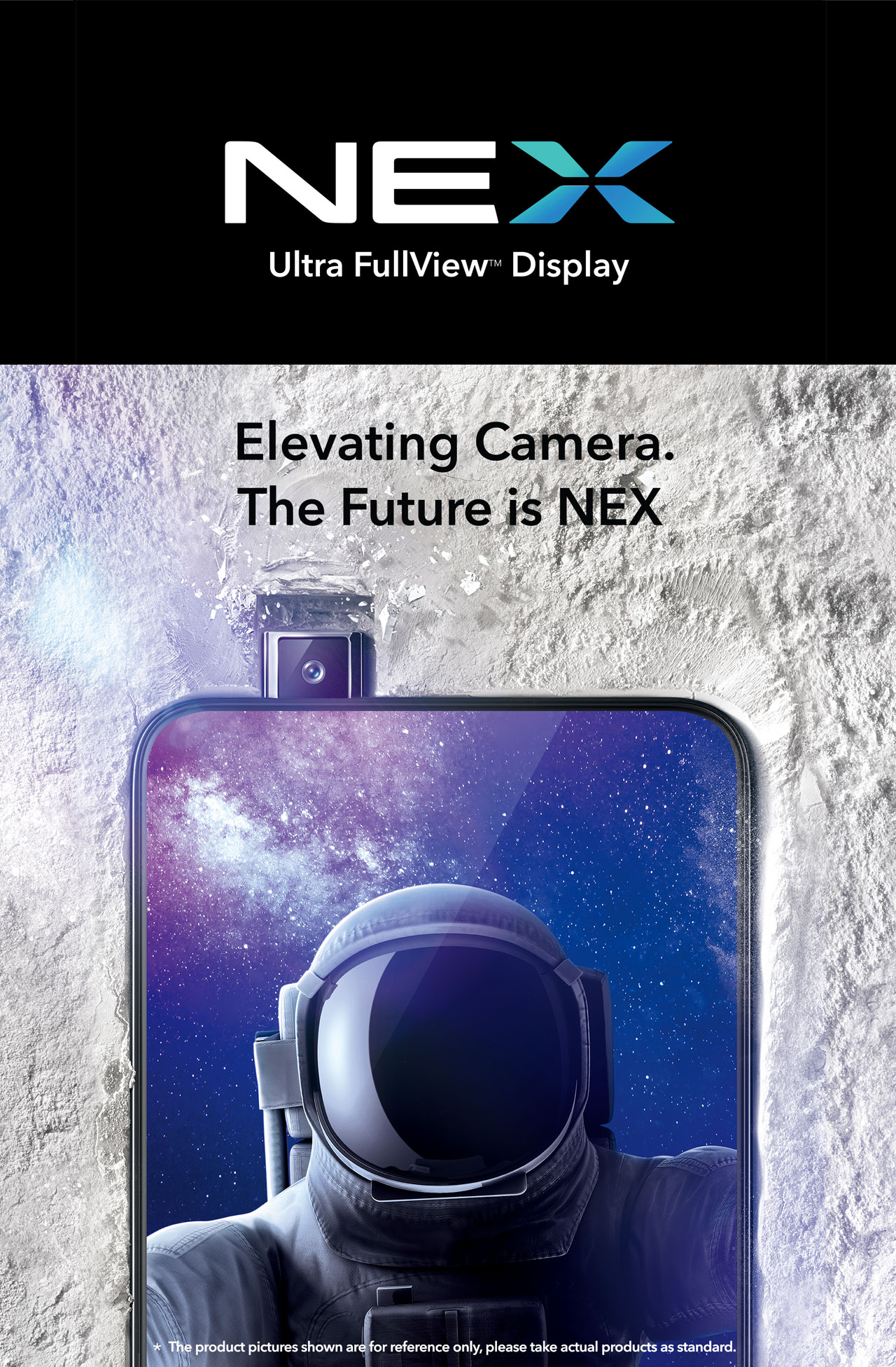

vivo 'NEX'

China's vivo has already reached an STB ratio of 91.24% with the pop-up design of its NEX, and OPPO's Find X has reached 93.8% with a sliding pop-up design.

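Samsung Electronics' pixel enhancement strategy

Samsung Electronics and Sony lead the global compact camera module market. Samsung's CMOS image sensor technology comes down to three keywords: ISOCELL, 3-Stack, and a better user experience (UX).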

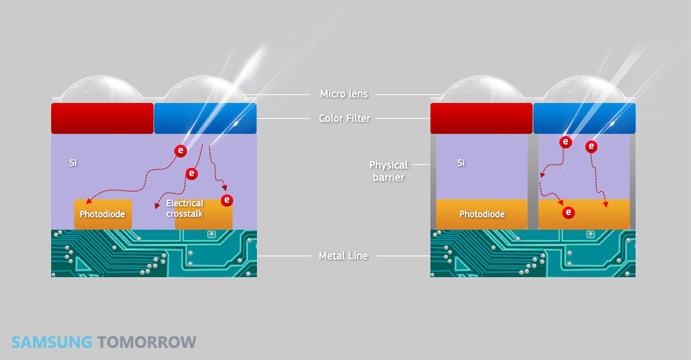

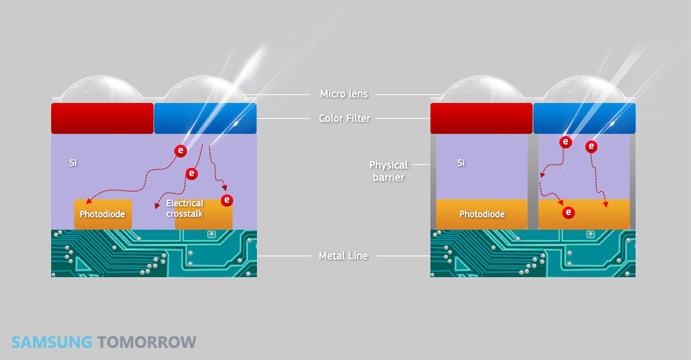

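Schematic of ISOCELL technology

ISOCELL is a structure that forms an insulating barrier between pixels to isolate adjacent pixels from one another. In other words, a physical wall is built around each of the millions of pixels that make up the image sensor so that light entering a pixel does not leak out. Its distinguishing feature is improved color reproduction even with ultra-small 1.0 µm pixels, and it can also cut the image sensor module thickness substantially, from 6.5 mm (1.12 µm pixels) to 5 mm (1.10 µm pixels).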

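Tetra Cell technology combined with ISOCELL

Samsung Electronics has combined ISOCELL with Tetra Cell technology. Tetra Cell reads each pixel individually when light is plentiful and merges four adjacent pixels into one when light is scarce. A sensor therefore operates at 40 megapixels by day and 10 megapixels by night. In effect, adjacent pixels are temporarily merged to enlarge the effective pixel size. This approach of pooling pixels in low light is expected to be refined and extended toward merging 9 or 16 pixels.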
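The pixel-merging idea can be sketched as simple block binning. This is a minimal illustration, not Samsung's implementation: it treats the frame as a single-channel grid of raw values, ignores the color filter layout, and assumes the merged value is the sum of the block (averaging would be an equally plausible choice). The function name `bin_pixels` is hypothetical.

```python
def bin_pixels(frame, factor=2):
    """Merge each factor x factor block of pixel values into one output value.

    factor=2 merges 4 pixels (the Tetra Cell case described above);
    factor=3 or 4 would merge 9 or 16 pixels.
    """
    rows, cols = len(frame), len(frame[0])
    if rows % factor or cols % factor:
        raise ValueError("frame dimensions must be multiples of the binning factor")
    return [
        [
            sum(frame[r + dr][c + dc] for dr in range(factor) for dc in range(factor))
            for c in range(0, cols, factor)
        ]
        for r in range(0, rows, factor)
    ]

# A dim 4 x 4 frame: 16 small pixels become 4 larger, brighter ones.
low_light_frame = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]
print(bin_pixels(low_light_frame))  # [[14, 22], [46, 54]]
```

The output has a quarter as many pixels as the input, which matches the 40-megapixel to 10-megapixel switch in the text.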

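Two photodiodes also sit beneath the ISOCELL structure, which improves autofocus. The two photodiodes and the subject form a triangle, and the processor uses triangulation to determine the subject's position and focus automatically.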
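The geometry behind triangulation can be sketched in a few lines. This is a generic textbook calculation, not the sensor's actual autofocus algorithm: it assumes two observation points a known baseline apart, each measuring the interior angle between the baseline and its line of sight to the subject. The function name and the sample values are hypothetical.

```python
import math

def triangulate_distance(baseline: float, angle_a_deg: float, angle_b_deg: float) -> float:
    """Perpendicular distance from the baseline to the target.

    angle_a_deg and angle_b_deg are the interior angles at the two
    observation points, between the baseline and the line of sight.
    """
    a = math.radians(angle_a_deg)
    b = math.radians(angle_b_deg)
    if a <= 0 or b <= 0 or a + b >= math.pi:
        raise ValueError("angles must be positive and sum to less than 180 degrees")
    # Law of sines gives the side from the first point to the target;
    # multiplying by sin(a) projects it onto the perpendicular.
    return baseline * math.sin(a) * math.sin(b) / math.sin(a + b)

# Two viewpoints 2 units apart, each seeing the target at 45 degrees.
print(triangulate_distance(2.0, 45.0, 45.0))
```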

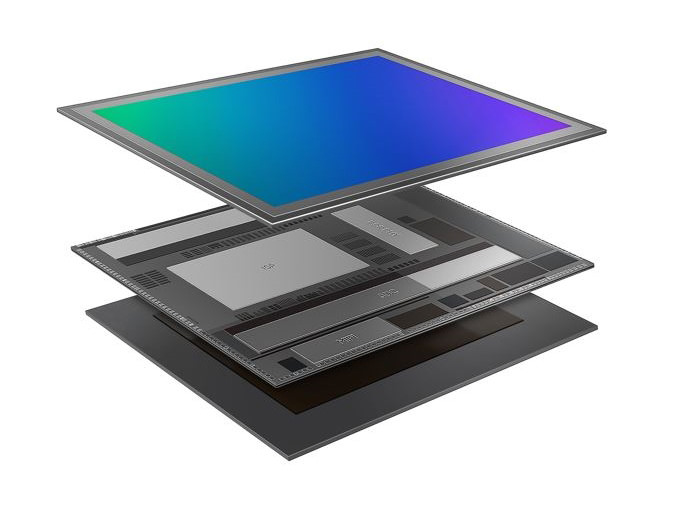

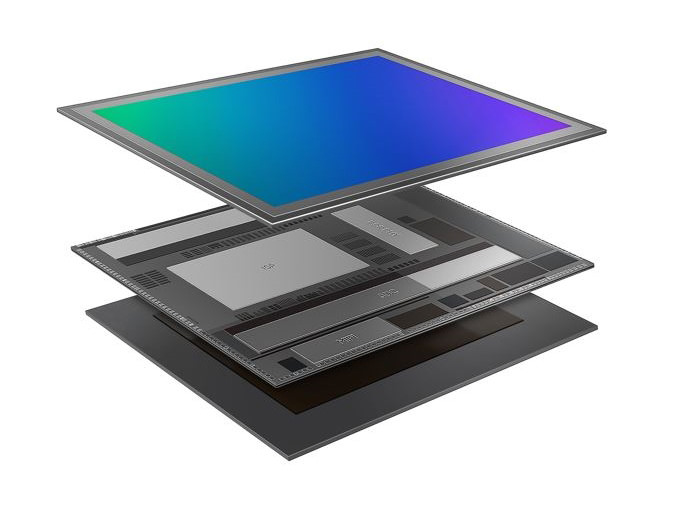

Structure of the 'ISOCELL Fast 2L3' image sensor, which stacks the sensor, logic, and DRAM in three layers

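Samsung Electronics uses 3-Stack technology, which integrates DRAM, its flagship product, into the camera module. There are various limits to how well a mobile AP can process and use the image data coming from a CMOS image sensor. So Samsung stacked the image sensor, the image signal processor, and DRAM in three layers, connected by through-silicon vias (TSV). Image data is handled at the DRAM level without traveling all the way to the mobile AP. The Galaxy S9's Super Slow-mo feature uses exactly this technology, storing a huge volume of frame data at high speed to capture every movement of the subject.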
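A back-of-the-envelope estimate shows why a fast buffer next to the sensor helps. The sketch below computes the raw data volume of a short high-speed burst. Every parameter value (resolution, bit depth, frame rate, duration) is an illustrative assumption rather than a figure from the article, and the helper name is hypothetical.

```python
def burst_buffer_bytes(width, height, bits_per_pixel, fps, seconds):
    """Raw, uncompressed size of a burst of frames, in bytes."""
    frames = round(fps * seconds)
    bytes_per_frame = (width * height * bits_per_pixel + 7) // 8  # round up to whole bytes
    return frames * bytes_per_frame

# Assumed burst: 1280 x 720, 10 bits per pixel, 960 frames per second for 0.2 s.
total = burst_buffer_bytes(1280, 720, 10, 960, 0.2)
per_second = burst_buffer_bytes(1280, 720, 10, 960, 1.0)
print(f"burst: {total / 1e6:.1f} MB, sustained rate: {per_second / 1e9:.2f} GB/s")
```

Under these assumptions the sensor produces on the order of a gigabyte per second during the burst, which is the kind of load the stacked DRAM is there to absorb.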

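Two are better than one

The dual camera is a representative technique for overcoming the limits of image sensor size. The first mobile phone to use one was the 'SCH-B710', released by Samsung Electronics in 2009. Now commonplace, dual camera technology splits and recombines the roles of a single camera to deliver functions that were previously impossible. There are three main combinations.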

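Samsung Electronics 'Galaxy Note 9' rear dual camera

The first pairs a wide-angle camera with a narrow-angle camera, which makes zoom possible: only the wide-angle camera is used for nearby subjects, and only the narrow-angle camera for distant ones.

The second divides the roles between a camera that focuses and a camera that does not. One camera produces a focused image and the other an unfocused image. When the processor composites the two, it can produce background blur, the so-called bokeh effect, in which only a specific subject is in focus and everything else is soft.

The third pairs an RGB camera with a monochrome camera. The RGB camera captures color and the monochrome camera captures luminance, and compositing the two images improves the quality of photos taken in low light.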
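The second and third combinations both come down to compositing two images pixel by pixel. The sketch below is a simplified illustration under stated assumptions, not how any particular phone does it: `blend_bokeh` assumes a subject mask is already available (the article does not say how one is obtained), and `fuse_rgb_with_mono` assumes the two cameras are perfectly aligned and uses the Rec. 601 luma weights as one common way to estimate brightness from RGB. Both function names are hypothetical.

```python
def blend_bokeh(sharp, blurred, subject_mask):
    """Per-pixel mix: mask 1.0 keeps the focused image, 0.0 keeps the unfocused one."""
    return [
        [m * s + (1.0 - m) * b for s, b, m in zip(s_row, b_row, m_row)]
        for s_row, b_row, m_row in zip(sharp, blurred, subject_mask)
    ]

def fuse_rgb_with_mono(rgb, mono_luma):
    """Rescale one RGB pixel so its brightness matches the monochrome reading."""
    r, g, b = rgb
    luma = 0.299 * r + 0.587 * g + 0.114 * b  # Rec. 601 luma weights
    if luma == 0:
        return (mono_luma, mono_luma, mono_luma)  # no color information to preserve
    gain = mono_luma / luma
    return tuple(min(255.0, channel * gain) for channel in rgb)

# Bokeh: the left pixel belongs to the subject, the right pixel to the background.
print(blend_bokeh([[10.0, 10.0]], [[50.0, 50.0]], [[1.0, 0.0]]))  # [[10.0, 50.0]]

# Low light: a dim reddish pixel is brightened to the mono camera's luminance.
print(fuse_rgb_with_mono((40, 20, 20), 80))
```

In the fusion step the color ratios of the RGB pixel are kept while its overall brightness follows the monochrome sensor's reading.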

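Dual camera technology can evolve into triple cameras and, further still, quadruple cameras.

With these technologies, users can easily create high-quality content befitting the 5G era. They can take an appetizing shot of their order in a cozy restaurant and post it to Instagram, or preserve an athlete's striking movement in slow motion.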

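Image sensors beyond the smartphone

“I'd like camera modules to go into as many places as possible. That's how our revenue goes up.” The remark drew laughter from the audience. It came from Lee Je-seok (이제석), a vice president in Samsung Electronics' S.LSI Division, who gave the keynote at the 4th Advanced Sensor 2025 Forum (제4회 첨단센서 2025 포럼), held on the 11th. The rapid development of camera modules sparked by the smartphone has left plenty of room to use them in many other fields.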

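Multiple camera modules covering different angles, combined with AI and deep learning, enable functions that were previously impossible. The AI takes in the image data from the camera modules together and processes it through deep learning, deriving three-dimensional information from two-dimensional images. With that 3D information, a device can locate objects and capture motion.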
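The simplest case of recovering depth from two 2D images is the classic two-camera stereo relation, sketched below. It is a geometric baseline rather than the deep learning approach the paragraph describes, and it assumes two identical, parallel, calibrated cameras. The function name and sample values are hypothetical.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Distance to a point seen by two parallel cameras.

    disparity_px is how far the point shifts horizontally between the two images;
    nearer objects shift more, so depth is inversely proportional to disparity.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of both cameras")
    return focal_length_px * baseline_m / disparity_px

# Assumed setup: 1000 px focal length, cameras 10 cm apart, 20 px shift.
print(depth_from_disparity(1000.0, 0.1, 20.0), "m")
```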

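Camera modules aim to do more than the human eye. The human eye is an ultra-high-performance image sensor that no existing image sensor can match. It takes in light at 576 megapixels. The visual information it receives is processed by the brain in real time. The two eyes also judge a subject's position from the tiny differences in timing and position with which light lands on each eye. In low light, the pupil dilates to make objects out.

Even so, camera modules can see, judge, and process things the human eye cannot. They can see ultraviolet and infrared light, and two or more modules can gather information from multiple angles. For example, an image sensor in an IoT device can analyze the user's gestures and run the device function the user wants.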

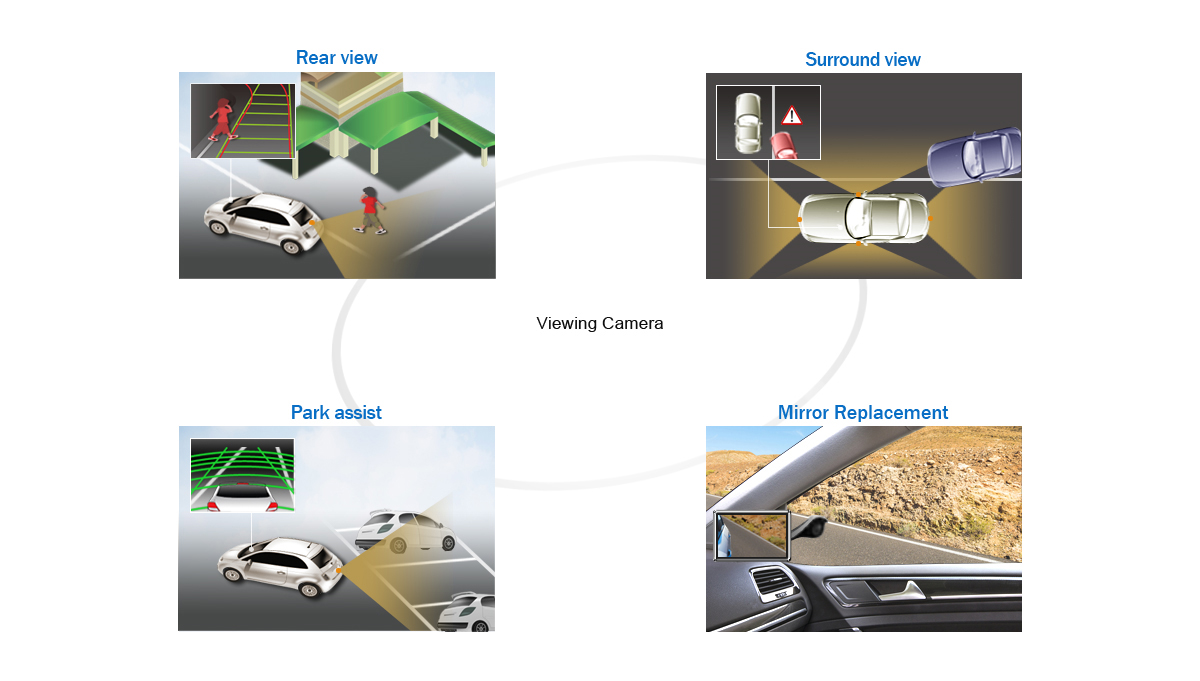

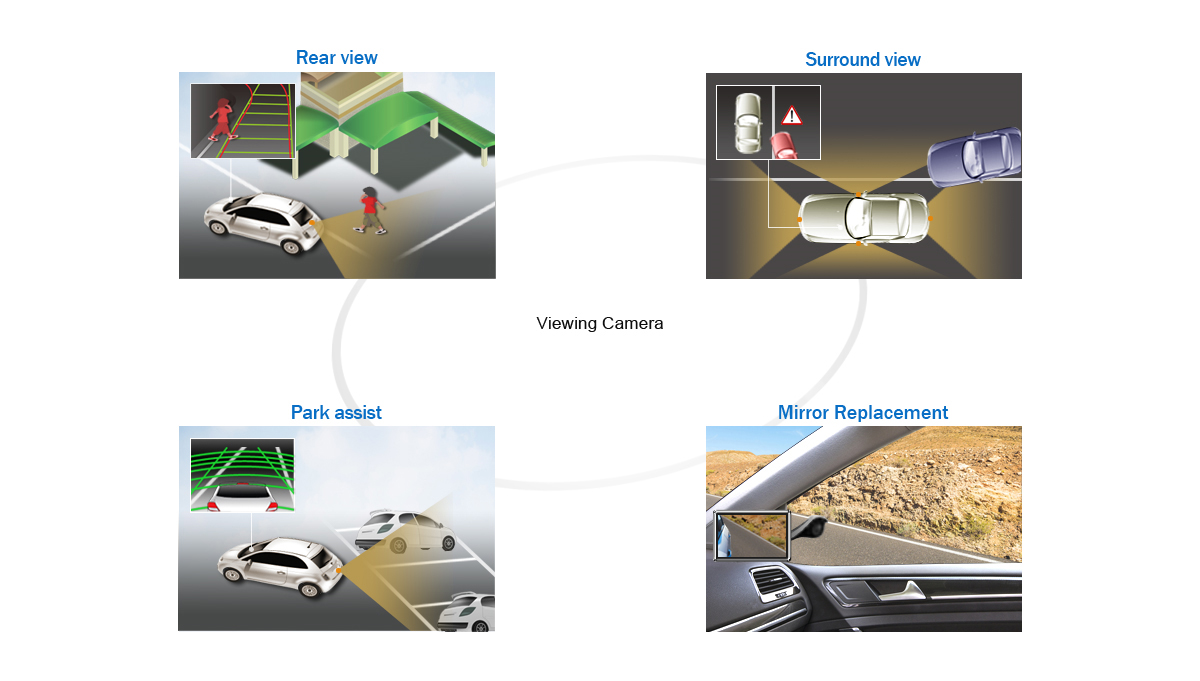

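Basic use cases for camera modules mounted on a car

Efforts to use image sensors in autonomous vehicles are well under way. The United States has mandated that cars released from 2018 be fitted with a rear-view camera, which helps drivers park more easily. The Level 3 autonomous vehicles developed so far carry 12 cameras. When autonomous driving technology reaches Level 5, even more cameras will be fitted.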