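The market for ultra-high-performance GPUs that accelerate server and AI workloads, including generative AI and large language models, is heating up. Since AMD, challenging market-dominant Nvidia, launched its new MI300X GPU on December 7, the two companies have been locked in a war of nerves.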

[This article was first published on e4ds+ on December 19, 2023, at 15:29.]

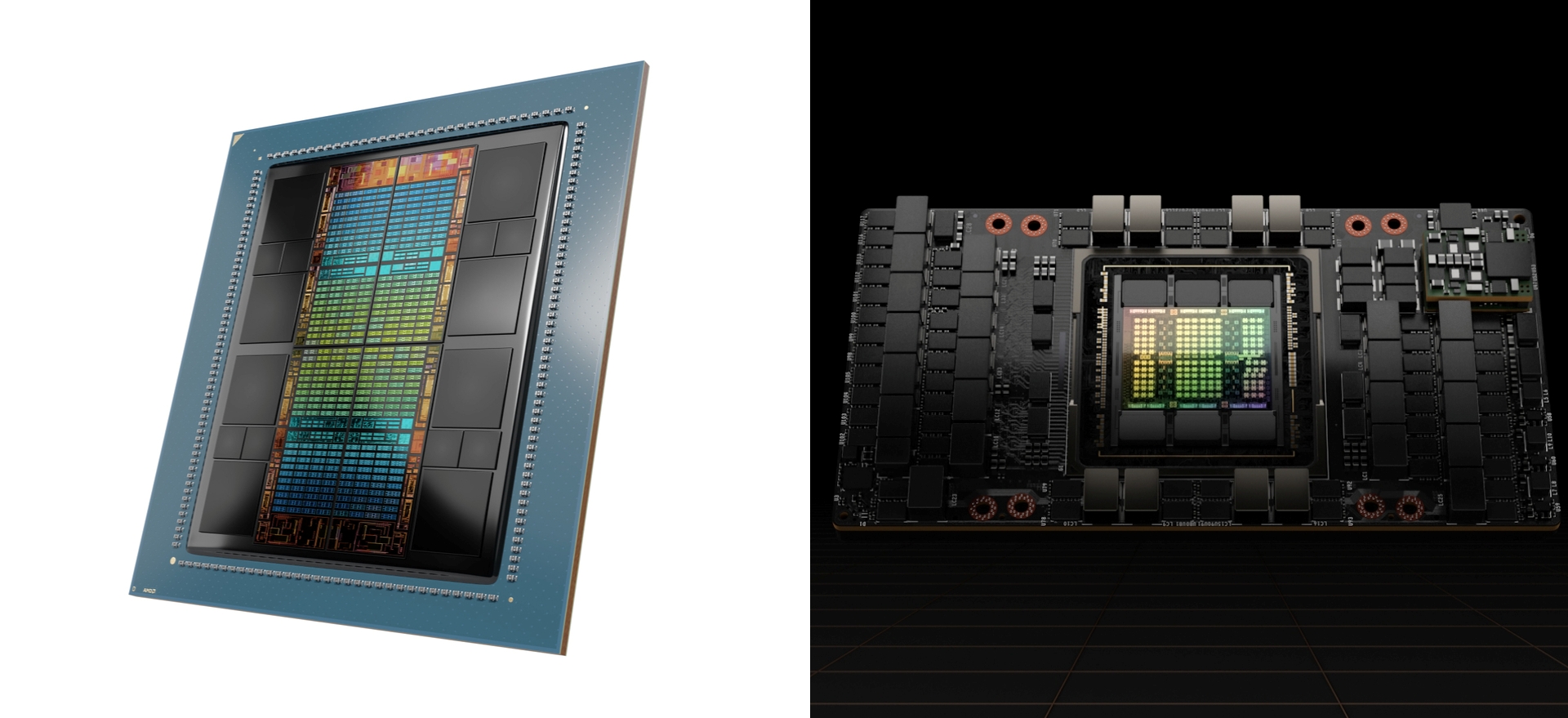

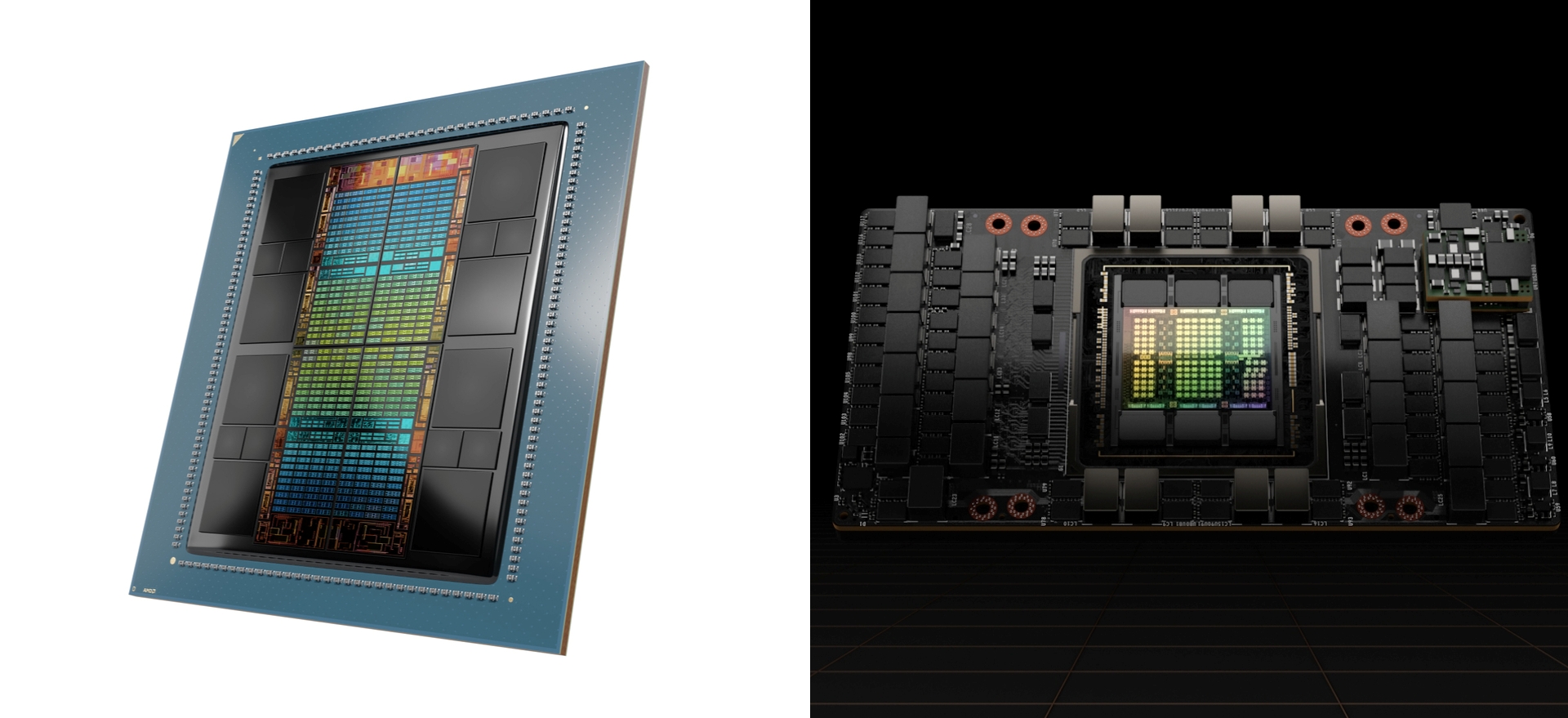

▲AMD MI300X and Nvidia H100

Nvidia: "We're 2x faster" vs. AMD: "Not so," in counter-rebuttal

H100 output worth 27 trillion won this year... AMD to secure a share of the AI server chip market

Nvidia already preparing next-generation H200 as AI chip competition overheats


■ Nvidia rebuts: "H100 is 2x faster"

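AMD unveiled the Instinct MI300 series at its "Advancing AI" event on December 7. In its presentation, AMD claimed that the MI300X delivers up to 20% higher performance than the H100 on a single GPU, and up to 60% higher performance when compared in a server configuration with eight GPUs.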

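Nvidia responded quickly with a rebuttal. On December 14, Nvidia argued that, now that TensorRT-LLM, its open-source software containing the latest kernel optimizations for the Nvidia Hopper architecture, has been released, Llama 2 70B inference on the H100 should be regarded as 2x faster than on the AMD MI300X.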

Its main argument was that AMD's earlier figures resulted from testing the H100 without its latest optimized software.

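Nvidia also explained that a DGX H100 can complete a single inference in 1.7 seconds at a batch size of one, that is, one inference request at a time, and that with a fixed 2.5-second response-time budget, an 8-GPU DGX H100 server can process more than five Llama 2 70B inferences per second.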

■ AMD counters: "Latest optimizations widen performance advantage to 2.1x"

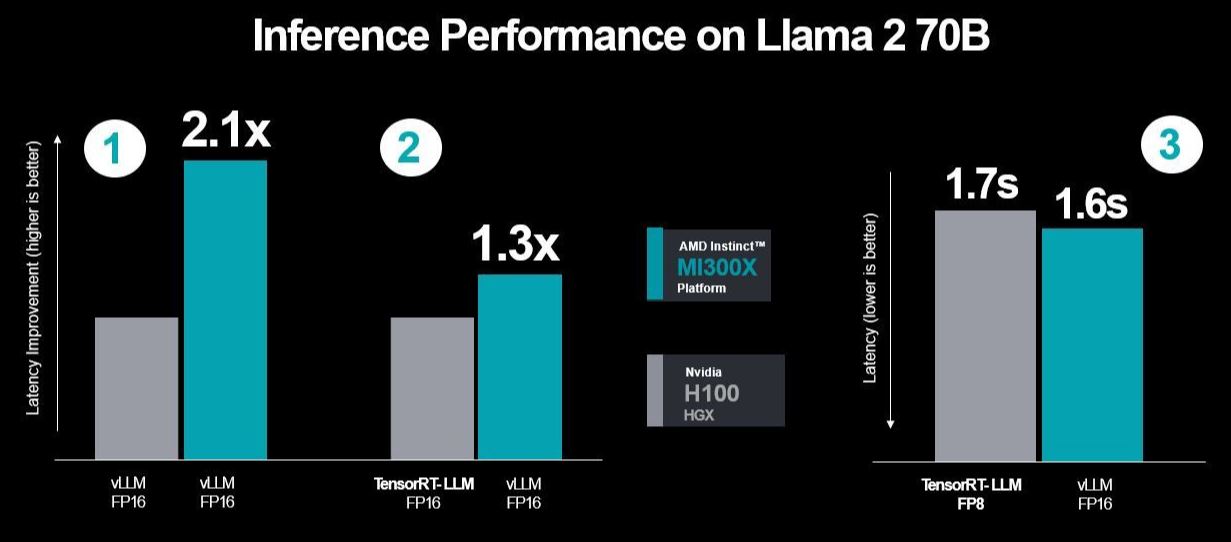

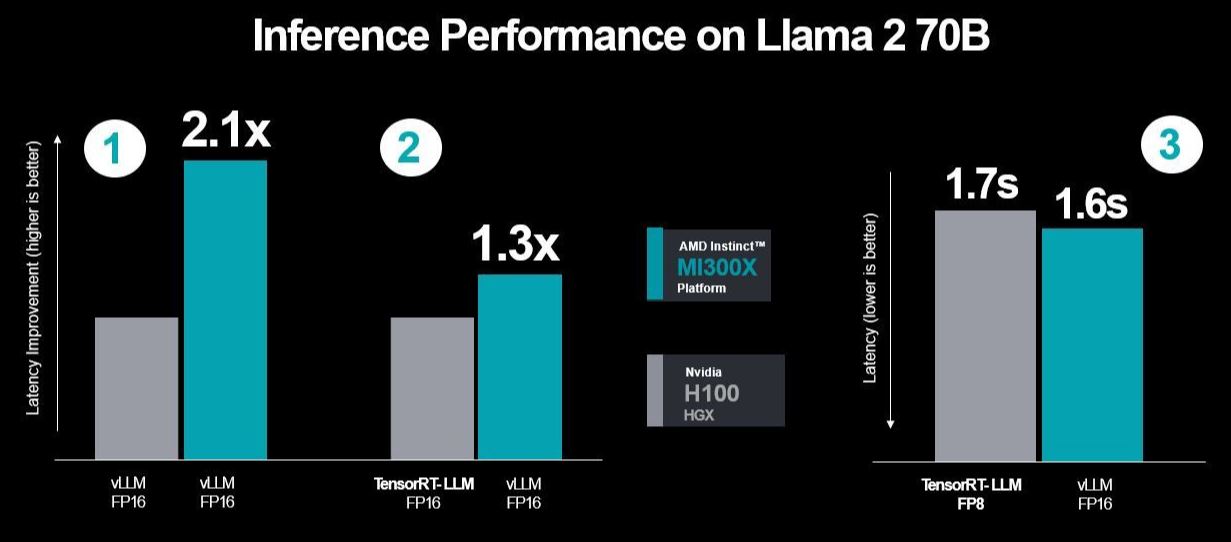

▲Comparison of H100 and MI300X inference performance on Llama 2 70B, as tested by AMD (Source: AMD blog)

Two days later, on December 16, AMD issued a counter-rebuttal, presenting new benchmark results that took Nvidia's arguments into account.

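AMD reran its tests using TensorRT-LLM on the Nvidia H100 and compared the MI300X's performance with the FP16 data type against the H100's performance with the FP8 data type. It also switched its performance data from relative latency figures to absolute throughput.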

On that basis, AMD released test results that reflect the latest gains from its updated ROCm software over the launch performance recorded in November.

According to AMD, its tests showed the MI300X achieving a 2.1x performance advantage over the H100 when both ran vLLM, thanks to the updated performance figures, and a 1.3x latency improvement even when the H100 ran TensorRT-LLM and the MI300X ran vLLM.

At a batch size of one, AMD said, Llama 2 70B inference took 1.7 seconds on the H100 and 1.6 seconds on the MI300X, giving the MI300X a performance edge.
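As a rough check on the size of that edge, the two batch-size-one latencies can be compared directly. The sketch below is only arithmetic on the figures reported in this article; the names `speedup`, `H100_LATENCY_S`, and `MI300X_LATENCY_S` are illustrative, and nothing here reproduces either vendor's benchmark methodology.

```python
# Batch-size-one Llama 2 70B latencies as reported in the article (seconds).
H100_LATENCY_S = 1.7
MI300X_LATENCY_S = 1.6


def speedup(baseline_s: float, candidate_s: float) -> float:
    """Return how many times faster the candidate is than the baseline."""
    return baseline_s / candidate_s


ratio = speedup(H100_LATENCY_S, MI300X_LATENCY_S)
percent_faster = (ratio - 1.0) * 100.0

print(f"MI300X vs. H100 at batch size 1: {ratio:.4f}x ({percent_faster:.2f}% faster)")
```

On these two numbers alone the advantage works out to roughly 6%, far smaller than the multi-x figures each side quotes under its own preferred configuration.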

By using the same conditions Nvidia had used for its batch-size-one single-inference comparison, namely 2,048 input tokens and 128 output tokens, AMD confronted the benchmarking controversy head-on in its counter-rebuttal.

■ Nvidia H100: about 550,000 units produced this year, worth 27 trillion won at market prices

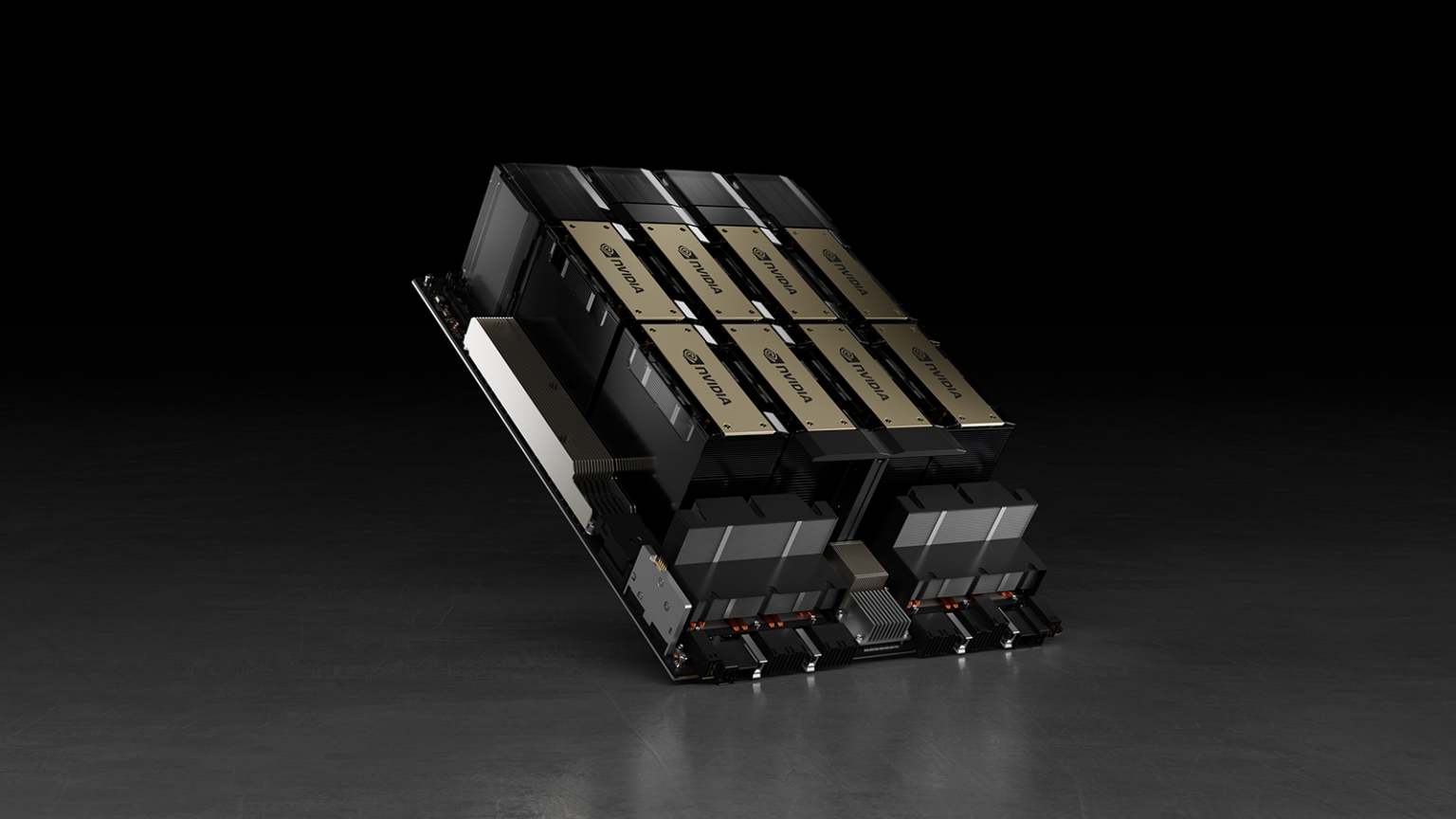

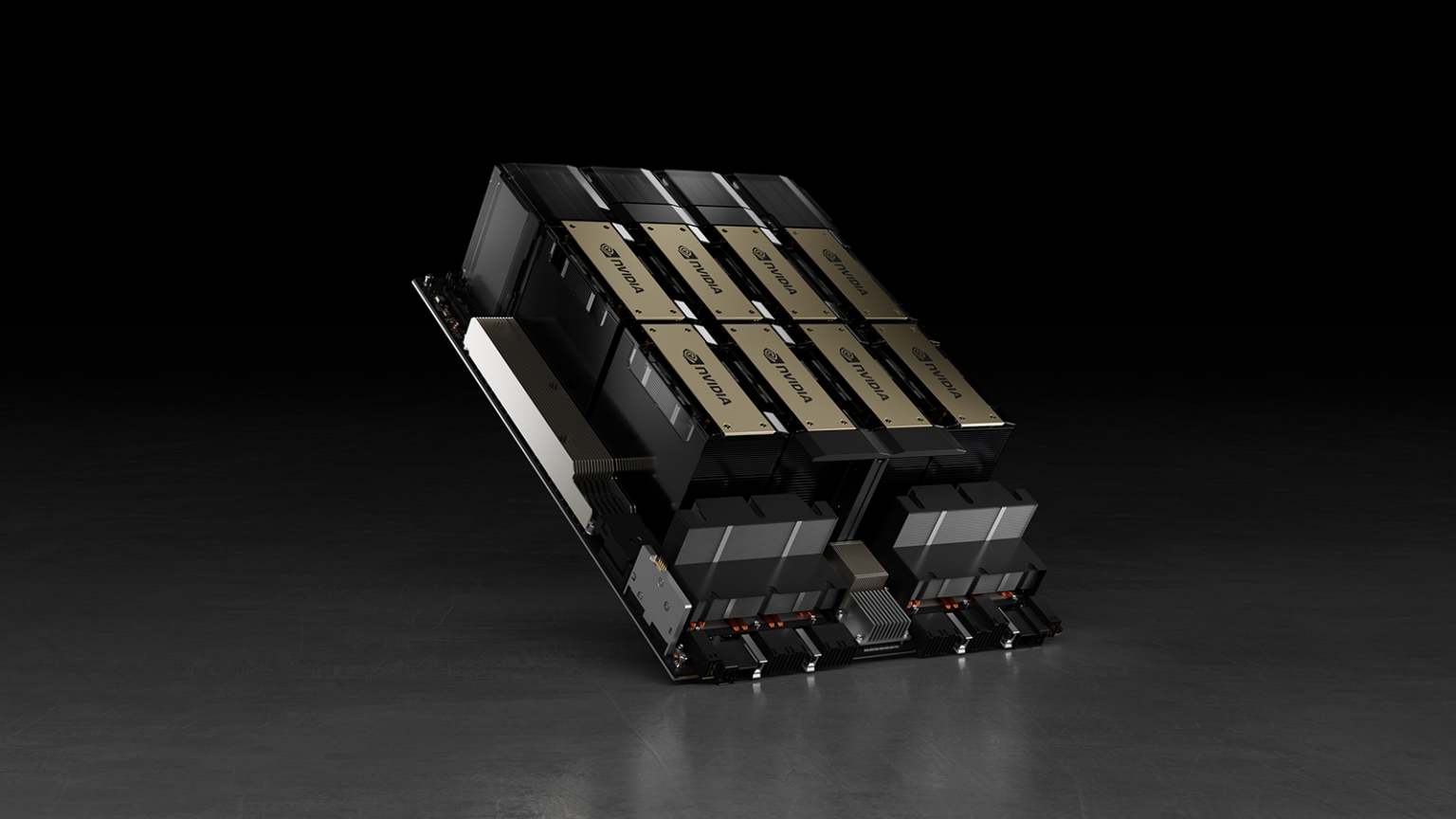

▲Nvidia H100 (Image: Nvidia)

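Nvidia's H100, launched in March 2022, is a high-priced, high-performance AI server chip: about 550,000 units have been produced this year alone, and the market price ranges from 40 million won at the low end to 60 million won at the high end.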

Valued at the midpoint of that price range, 50 million won, this year's output comes to roughly 27.5 trillion won, a figure that gives a sense of how large the market has become.
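The estimate above is simple multiplication, and it can be reproduced for the low and high ends of the price range as well. The following is a minimal sketch using only the unit count and prices quoted in this article; the function and variable names are illustrative, and the result is a back-of-the-envelope market value, not reported revenue.

```python
# Figures quoted in the article: H100 units produced this year and
# the low/high ends of the per-unit market price in Korean won.
UNITS_PRODUCED = 550_000
PRICE_LOW_KRW = 40_000_000
PRICE_HIGH_KRW = 60_000_000


def market_value_krw(units: int, unit_price_krw: int) -> int:
    """Total market value in won for a given unit count and unit price."""
    return units * unit_price_krw


price_mid_krw = (PRICE_LOW_KRW + PRICE_HIGH_KRW) // 2  # 50 million won

low_total = market_value_krw(UNITS_PRODUCED, PRICE_LOW_KRW)
mid_total = market_value_krw(UNITS_PRODUCED, price_mid_krw)
high_total = market_value_krw(UNITS_PRODUCED, PRICE_HIGH_KRW)

TRILLION = 10**12
for label, total in (("low", low_total), ("mid", mid_total), ("high", high_total)):
    print(f"{label:>4}: {total / TRILLION:.1f} trillion won")
```

At the midpoint price this gives the 27.5 trillion won cited above; at the low and high prices the same output would be worth 22 trillion and 33 trillion won, respectively.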

Nvidia's revenue for the third quarter of this year was $18.1 billion, or about 23 trillion won.

■ AI chip market booms... AMD likely to take some market share

AMD has thrown its hat into the ring in the fight for market share, positioning itself as a challenger to Nvidia, which controls 90% of the server chip market.

Microsoft, Meta, and others are reported to be interested in the MI300 AI GPU series and to be considering adopting it.

Investment analysts are also raising their price targets for AMD and forecasting that its server GPU revenue will rise steeply in 2024.

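The reasoning is that, with demand for AI server chips outstripping supply, AMD is expected to benefit almost inevitably, and its products are expected to establish themselves in the market as an alternative that eases the shortage.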

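With Nvidia AI server chips such as the H100 so scarce that they cannot be had even at a premium, the prevailing view in the industry is that some buyers will have no choice but to turn to AMD in order to deploy AI servers quickly.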

■ MI300X and MI300A to launch in 2024... Nvidia announces H200 launch

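AMD's MI300X and MI300A are slated to reach the market in the second quarter of 2024, and Nvidia's next-generation AI GPU, the H200, billed as 2x faster than the H100, is likewise slated for release in the second quarter of 2024. AMD's latest GPUs use HBM3, while Nvidia has said the H200 will be the first to use fifth-generation HBM3E, so the AI semiconductor performance race is expected to begin in earnest.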

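The GPU rivalry between AMD and Nvidia, as intriguing as the CPU battle between AMD and Intel, is now playing out in the AI server market, and all eyes are on how the fierce competition unfolds.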